Responsible AI governance in app development platforms is a systematic approach that uses policies and controls to keep AI behavior ethical, transparent, and secure. It governs how models are designed, deployed, and monitored. Why is this critical? Because unmanaged AI systems can introduce bias, security risks, and regulatory violations across digital applications.

Key Takeaways

- Responsible AI governance in app development platforms ensures ethical and compliant AI use

- Transparency and accountability are foundational strategies

- Governance frameworks vary across industries but share core principles

- Centralized ownership improves effectiveness and reduces risk

- Balancing innovation and control is a key governance challenge

How does responsible AI governance apply to app development platforms?

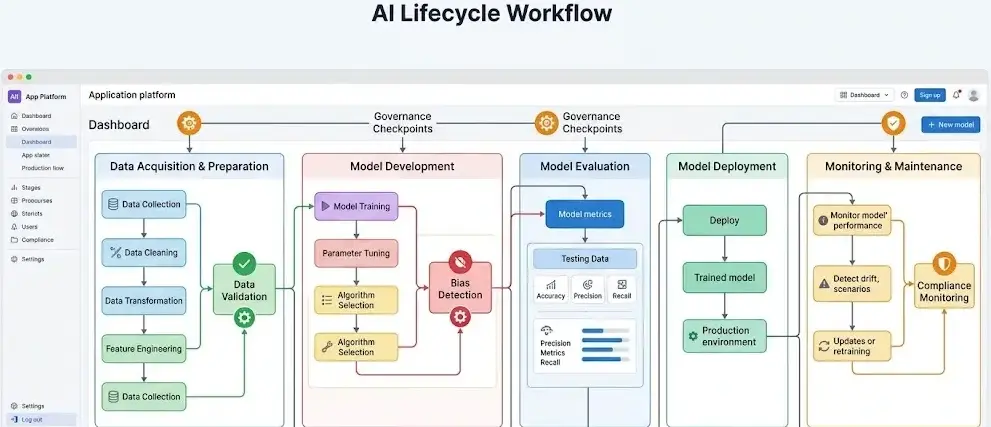

Responsible AI governance in app development platforms is a formal system that ensures AI models follow ethical, legal, and operational standards throughout their lifecycle.

Core components include:

- Policy frameworks: Define acceptable AI use

- Risk management: Identify and mitigate bias or misuse

- Compliance controls: Align with regulations such as GDPR and industry standards

- Auditability: Maintain logs for transparency and review

Example: A fintech app implements governance rules to detect bias in loan approval algorithms and logs decisions for audit.
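The fintech example above can be sketched in a few lines of Python. This is a minimal illustration, not a production fairness audit: the function names, the sample data, and the 0.8 review threshold are all assumptions made for the example. It compares approval rates between applicant groups and writes the result as a JSON audit entry.

```python
import json
from collections import defaultdict
from datetime import datetime, timezone


def approval_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, is_approved in decisions:
        totals[group] += 1
        if is_approved:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest to the highest group approval rate (1.0 = parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


def audit_record(rates, ratio, threshold=0.8):
    """Build a JSON audit entry; the 0.8 threshold is an illustrative assumption."""
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "approval_rates": rates,
        "disparate_impact_ratio": round(ratio, 3),
        "flagged_for_review": ratio < threshold,
    }, sort_keys=True)


# Hypothetical sample: (applicant group, loan approved?)
sample = [("A", True), ("A", True), ("A", False), ("A", True),
          ("B", True), ("B", False), ("B", False), ("B", False)]
rates = approval_rates(sample)
ratio = disparate_impact_ratio(rates)
print(audit_record(rates, ratio))
```

In this sample, group A is approved 75% of the time and group B 25%, so the ratio falls well below the assumed threshold and the entry is flagged for human review. A real deployment would choose its own fairness metric and threshold based on the applicable regulation.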

Which two strategies are important for ensuring responsible and ethical use of AI?

Responsible AI governance in app development platforms relies heavily on two key strategies:

- Transparency and explainability
  - Clear model decisions
  - User-facing explanations
  - Documentation of data sources

- Accountability and oversight
  - Defined ownership of AI outcomes
  - Regular audits and reviews
  - Human-in-the-loop validation

These strategies reduce risks and improve trust in AI-powered applications.
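Human-in-the-loop validation is often implemented as a routing rule: confident predictions are applied automatically and the rest are queued for a person. The sketch below assumes a model that reports a confidence score between 0 and 1; the `Prediction` class, the `route` function, and the 0.9 threshold are hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    request_id: str
    label: str
    confidence: float  # model-reported score in [0, 1]


def route(prediction, auto_threshold=0.9):
    """Auto-apply confident predictions; queue the rest for a human reviewer."""
    if prediction.confidence >= auto_threshold:
        return "auto_apply"
    return "human_review"


# Hypothetical predictions awaiting a decision
queue = [Prediction("req-1", "approve", 0.97), Prediction("req-2", "deny", 0.62)]
for p in queue:
    print(p.request_id, route(p))
```

Lowering the threshold sends fewer cases to reviewers and speeds up decisions, at the cost of less oversight. The right value depends on how sensitive the application is.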

What are practical examples of responsible ai governance in app development platforms?

Responsible AI governance in app development platforms can be observed across industries:

| Industry | Governance Application Example |
| --- | --- |
| Healthcare | Validating diagnostic models to avoid bias |
| Finance | Monitoring fraud detection systems for fairness |
| E-commerce | Regulating recommendation engines for transparency |
| Public sector | Ensuring fair allocation of social services |

Related concepts often discussed alongside this topic include AI risk management, model monitoring, and algorithmic accountability frameworks.

What is AI governance and its primary objective in public policy?

Responsible AI governance in app development platforms extends into public policy, where the primary objectives are:

- Protecting citizens from harmful AI outcomes

- Ensuring fairness and non-discrimination

- Promoting transparency in automated decisions

Governments use governance frameworks to regulate high-risk AI applications such as surveillance, healthcare diagnostics, and financial systems.

What does accountability mean in AI systems used in governance?

Responsible AI governance in app development platforms requires accountability, meaning:

- Every AI decision must be traceable

- Responsible individuals or teams must be clearly assigned

- Systems must allow audit and correction mechanisms

Example: If an AI system denies a service request, the organization must explain the reason and provide recourse.
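One way to make a decision traceable is to store a record that names the accountable owner, the reason, and the recourse path at the moment the decision is made. The sketch below is an assumption about how such a record might look; `DecisionRecord`, `user_notice`, and all field values are hypothetical and not taken from any specific platform.

```python
import uuid
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """One traceable AI decision with a named owner and a recourse path."""
    model_version: str
    owner: str      # team or person accountable for the outcome
    outcome: str
    reason: str     # plain-language explanation shown to the user
    recourse: str   # how the user can contest the decision
    decision_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    decided_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def user_notice(record):
    """Format the explanation and recourse path for the affected user."""
    return (
        f"Decision {record.decision_id}: {record.outcome}. "
        f"Reason: {record.reason}. To contest: {record.recourse}."
    )


record = DecisionRecord(
    model_version="service-eligibility-v3",  # hypothetical model name
    owner="eligibility-governance-team",     # hypothetical owning team
    outcome="denied",
    reason="required address verification was not completed",
    recourse="submit proof of address for manual review",
)
print(user_notice(record))
```

The record is frozen so that an entry cannot be altered after the fact. A correction would be stored as a new record that references the original `decision_id`.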

What is a critical consequence of distributing AI governance responsibility across too many roles?

Responsible AI governance in app development platforms becomes ineffective when accountability is fragmented.

Key consequence:

- When no single role owns an AI outcome, decisions are delayed and risk exposure grows because issues fall between teams

Best practice:

- Establish a central governance authority with defined responsibilities

- Support with cross-functional collaboration, not dilution of ownership

What is the key trade-off faced in AI governance?

Responsible AI governance in app development platforms often has to balance innovation against control:

| Factor | Governance Impact |
| --- | --- |
| Strict controls | Higher compliance, slower innovation |
| Flexible policies | Faster deployment, higher risk |

Organizations must align governance levels with application sensitivity and regulatory requirements.
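Matching governance to sensitivity can be expressed as a small lookup from risk tier to required controls. The tiers, the two classification questions, and the control names below are illustrative assumptions and do not come from a specific regulation or framework.

```python
# Hypothetical mapping from risk tier to the controls an app must enable
CONTROLS_BY_TIER = {
    "low": {"audit_log": True, "bias_test_before_release": False, "human_review": False},
    "medium": {"audit_log": True, "bias_test_before_release": True, "human_review": False},
    "high": {"audit_log": True, "bias_test_before_release": True, "human_review": True},
}


def risk_tier(uses_personal_data, affects_individual_outcomes):
    """Classify an AI feature; decisions about individuals rank highest."""
    if affects_individual_outcomes:
        return "high"
    if uses_personal_data:
        return "medium"
    return "low"


def required_controls(uses_personal_data, affects_individual_outcomes):
    """Return the tier and the controls that tier requires."""
    tier = risk_tier(uses_personal_data, affects_individual_outcomes)
    return tier, CONTROLS_BY_TIER[tier]


# Hypothetical features: (uses personal data?, affects individual outcomes?)
features = {
    "search autocomplete": (False, False),
    "product recommendations": (True, False),
    "loan approval": (True, True),
}
for name, flags in features.items():
    tier, controls = required_controls(*flags)
    enabled = [control for control, on in controls.items() if on]
    print(f"{name}: {tier} -> {', '.join(enabled)}")
```

Low-risk features keep only an audit log and can ship quickly, while features that decide outcomes for individuals carry every control. This mirrors the trade-off in the table above.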

Conclusion

Responsible AI governance in app development platforms ensures structured, accountable, and compliant AI deployment across industries. A clear governance model reduces risks while maintaining operational efficiency. As adoption grows, integrating governance with evolving practices, such as the role of AI in web and app development, will remain essential for sustainable and secure innovation.

FAQs

What is AI governance?

It is a formal framework that ensures AI systems operate responsibly, securely, and in line with regulations.

Which two strategies ensure responsible AI use?

Transparency in decision-making and accountability through oversight are the two most critical strategies.

What is the primary objective of AI governance in public policy?

Its main goal is to protect users, ensure fairness, and regulate high-risk AI applications.

What does accountability in AI systems mean?

It means AI decisions are traceable and assigned to responsible individuals or teams for oversight.

What is a key risk of poor governance structure?

Distributing responsibility across too many roles can lead to unclear ownership and compliance failures.
