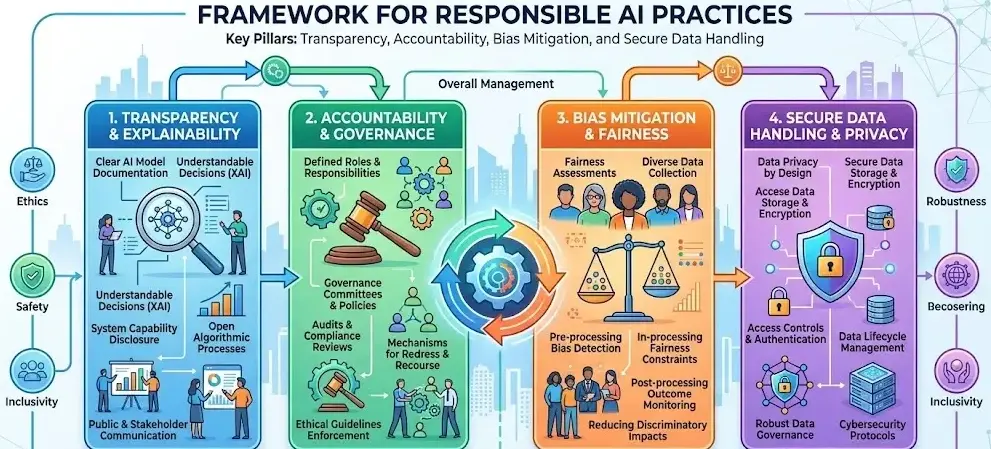

Responsible AI practices in app development are the ethical, transparent, and accountable methods teams apply when designing and deploying AI-powered application features. These practices help systems operate reliably, respect user rights, and comply with regulatory standards. How can organizations keep intelligent systems fair, secure, and auditable at scale?

Key Takeaways

- Responsible AI practices promote fairness, transparency, and accountability

- Governance frameworks are essential for oversight and compliance

- Regulatory standards guide implementation across industries

- Continuous monitoring helps mitigate risks and biases

- Practical applications span healthcare, finance, and retail sectors

What are responsible AI practices in app development and why are they important?

Responsible AI practices in app development establish safeguards that help systems behave predictably and ethically.

Key objectives include:

- Ensuring fairness in automated decisions

- Maintaining data privacy and security

- Enabling transparency and explainability

- Supporting regulatory compliance

Example:

A financial application using credit scoring models must prevent bias against demographic groups and provide explainable outcomes.
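One way to check the credit-scoring example above for group-level bias is to compare approval rates across groups. The sketch below is illustrative only: the function names, the sample data, and the 0.8 threshold (borrowed from the commonly cited four-fifths rule of thumb) are assumptions, not a legal or regulatory test.

```python
from collections import defaultdict

def approval_rates(decisions):
    """Return the approval rate per group.

    `decisions` is an iterable of (group, approved) pairs,
    where `approved` is True or False.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}

def disparate_impact_ratio(decisions):
    """Ratio of the lowest group approval rate to the highest (1.0 means parity)."""
    rates = approval_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved in any group, so the rates are equal
    return min(rates.values()) / highest

# Hypothetical sample: group A approved 3 of 4, group B approved 1 of 4.
sample = [("A", True), ("A", True), ("A", True), ("A", False),
          ("B", True), ("B", False), ("B", False), ("B", False)]
ratio = disparate_impact_ratio(sample)
needs_review = ratio < 0.8  # assumed review threshold
```

A low ratio does not prove discrimination on its own, but it is a reasonable trigger for a closer fairness audit.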

What principles define responsible AI practices in app development?

Responsible AI practices in app development are built on principles that are widely accepted across industries.

| Principle | Description |
| --- | --- |
| Fairness | Avoids discrimination in outputs |
| Accountability | Assigns responsibility for system outcomes |
| Transparency | Ensures decisions can be understood |
| Privacy | Protects user data and sensitive information |
| Security | Prevents misuse and unauthorized access |

Industry Practice:

Organizations implement bias detection tools and conduct fairness audits during model validation.

How is responsible AI governance applied in app development platforms?

Responsible AI practices in app development rely on governance frameworks for consistent oversight.

Core governance components:

- Policy frameworks: Define acceptable system behavior

- Risk assessments: Identify potential ethical or operational risks

- Audit mechanisms: Track decisions and system outputs

- Compliance checks: Align with regulations such as GDPR and standards such as ISO/IEC 27001
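The audit mechanism in the list above can be sketched as an append-only decision log in which each entry stores a hash of the previous one, so later edits become detectable. This is a minimal in-memory illustration: the `AuditLog` class, its field names, and the sample model version are hypothetical, and a production system would also need durable storage and would likely store minimized or hashed inputs rather than raw values.

```python
import hashlib
import json
from datetime import datetime, timezone

class AuditLog:
    """In-memory, append-only log where each entry hashes the previous one."""

    def __init__(self):
        self.entries = []

    def record(self, model_version, inputs, output):
        """Append one decision and link it to the previous entry."""
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "inputs": inputs,
            "output": output,
            "prev_hash": prev_hash,
        }
        body["hash"] = self._digest(body)
        self.entries.append(body)
        return body

    @staticmethod
    def _digest(body):
        # Hash every field except the hash itself, with a stable key order.
        payload = {k: v for k, v in body.items() if k != "hash"}
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def verify(self):
        """Return True if no entry has been altered, removed, or reordered."""
        prev_hash = ""
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True

log = AuditLog()
log.record("credit-model-v1", {"income": 52000}, "approved")
log.record("credit-model-v1", {"income": 18000}, "declined")
intact = log.verify()
```

Chaining hashes keeps the check cheap: a periodic audit only needs to walk the log once to confirm that recorded decisions match what was originally written.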

Example Workflow:

- Define governance policies

- Conduct risk classification

- Implement monitoring tools

- Perform periodic audits

What are common challenges in implementing responsible AI practices in app development?

Implementing responsible AI practices in app development raises several operational and technical challenges.

Key challenges:

- Data bias and incomplete datasets

- Lack of explainability in complex models

- Integration with legacy systems

- Evolving regulatory requirements

Mitigation strategies:

- Use diverse and representative datasets

- Apply explainable model techniques

- Establish continuous monitoring systems
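As one possible form of the continuous monitoring mentioned above, a team can compare the distribution of a model input in production against a baseline sample. The sketch below uses the Population Stability Index (PSI); the equal-width binning, the small floor for empty bins, and the 0.25 alert threshold are assumptions chosen for illustration, and teams usually tune them per feature.

```python
import math

def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a recent sample of a numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the baseline range
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical data: the recent sample is shifted upward by 50 units.
baseline = list(range(100))
recent = [v + 50 for v in baseline]

psi_same = population_stability_index(baseline, baseline)
psi_shifted = population_stability_index(baseline, recent)
drift_alert = psi_shifted > 0.25  # assumed alert threshold
```

A drift alert like this does not say whether the model has become unfair or inaccurate; it only signals that the inputs have changed enough to justify re-running validation and fairness checks.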

How do regulatory standards support responsible AI practices in app development?

Responsible AI practices in app development draw on established regulations and standards, some regional and some international.

Common regulations and standards:

- GDPR (EU): Data protection and user consent

- HIPAA (US): Privacy and security of healthcare data

- ISO/IEC 27001: Information security management

Example:

A healthcare application that handles protected health information in the United States must comply with HIPAA, which typically involves encrypted data storage and controlled access.
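The controlled-access part of the example above can be sketched as a role-based permission check that runs before any record is returned. The roles, permission names, and helper functions here are hypothetical, and the sketch covers access control only; it does not address encryption at rest.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission mapping; real roles come from organizational policy.
ROLE_PERMISSIONS = {
    "physician": {"read_record", "write_record"},
    "billing": {"read_billing"},
    "support": set(),
}

@dataclass(frozen=True)
class User:
    name: str
    role: str

class AccessDenied(Exception):
    """Raised when a user's role does not grant the requested permission."""

def require_permission(user, permission):
    """Raise AccessDenied unless the user's role grants the permission."""
    allowed = ROLE_PERMISSIONS.get(user.role, set())  # unknown roles get nothing
    if permission not in allowed:
        raise AccessDenied(f"{user.name} ({user.role}) lacks {permission}")

def read_patient_record(user, records, patient_id):
    """Return a patient record only after the permission check passes."""
    require_permission(user, "read_record")
    return records[patient_id]

# Hypothetical in-memory store standing in for an encrypted database.
records = {"p1": {"diagnosis": "example"}}
record = read_patient_record(User("dr_a", "physician"), records, "p1")
```

Denying by default, so that unknown roles receive an empty permission set, keeps a misconfigured account from silently gaining access.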

What are practical examples of responsible AI practices in app development?

Responsible AI practices in app development are implemented across various industries.

Examples:

- Healthcare apps: Diagnostic support systems with audit trails

- Retail apps: Personalized recommendations with user consent

- Finance apps: Fraud detection with explainable alerts
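To illustrate what an explainable alert might look like in the finance example above, the sketch below returns the reasons behind a flag along with the flag itself. The rules, thresholds, and names are invented for illustration; a real fraud system would likely combine a trained model with an explanation method rather than three fixed rules.

```python
from dataclasses import dataclass, field

@dataclass
class Alert:
    flagged: bool
    reasons: list = field(default_factory=list)

def check_transaction(amount, country, home_country, hour, amount_limit=5000):
    """Flag a transaction and list every rule that fired."""
    reasons = []
    if amount > amount_limit:
        reasons.append(f"amount {amount} exceeds limit {amount_limit}")
    if country != home_country:
        reasons.append(f"country {country} differs from home country {home_country}")
    if hour < 5:
        reasons.append(f"unusual hour {hour}:00")
    return Alert(flagged=bool(reasons), reasons=reasons)

# Hypothetical transactions: one suspicious, one routine.
suspicious = check_transaction(7200, "BR", "DE", hour=14)
routine = check_transaction(40, "DE", "DE", hour=14)
```

Returning the reasons with the decision lets a reviewer or customer see why a transaction was held, which supports both transparency and later audits.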

Written guidance on responsible AI practices in app development, often distributed as a PDF, is typically referenced alongside ethical AI frameworks, compliance guidelines, and model risk management documentation.

Conclusion

Responsible AI practices in app development provide a structured framework for building reliable, compliant, and transparent systems. Organizations that integrate governance, fairness, and accountability are better placed to maintain long-term system integrity. For broader context, understanding the limitations of AI in app development helps refine these practices and address real-world constraints.

FAQs

What are responsible AI practices in app development?

They are structured methods ensuring fairness, transparency, and compliance in intelligent application systems.

Why are responsible AI practices important?

They reduce risks such as bias, data misuse, and regulatory violations.

Is governance a core part of responsible AI practices?

Yes. Governance provides oversight, accountability, and compliance throughout the development lifecycle.

What industries require responsible AI practices the most?

Healthcare, finance, and retail rely heavily on these practices due to sensitive data and decision-making impact.

How can developers implement responsible AI practices effectively?

By following ethical guidelines, conducting regular audits, and aligning with applicable regulations and standards.
